
Pose2Sim

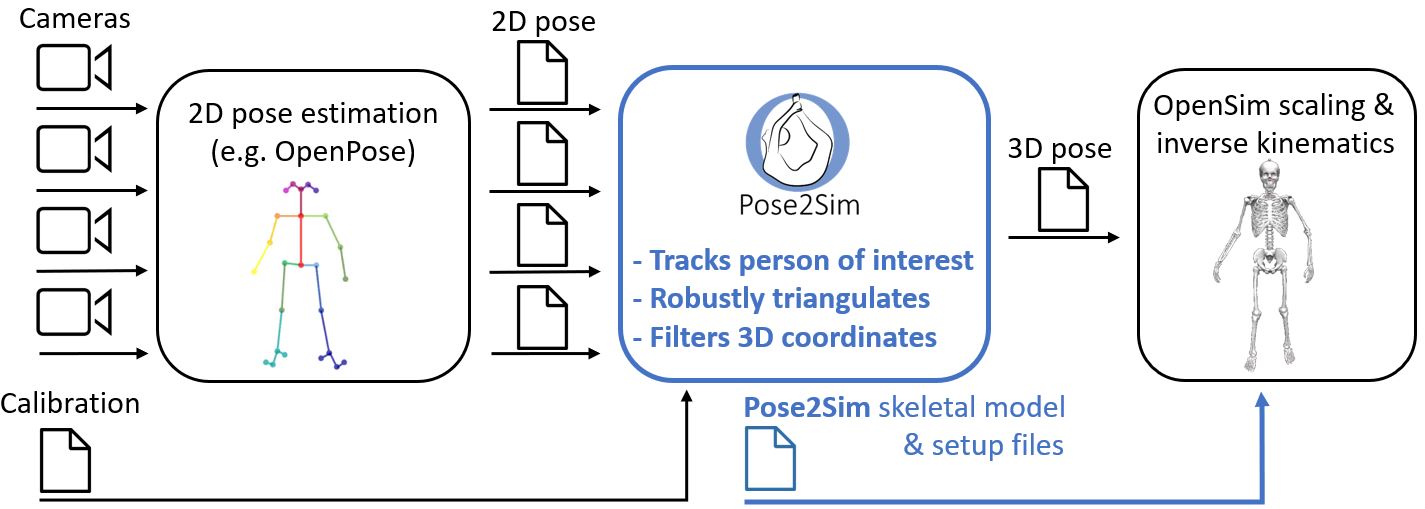

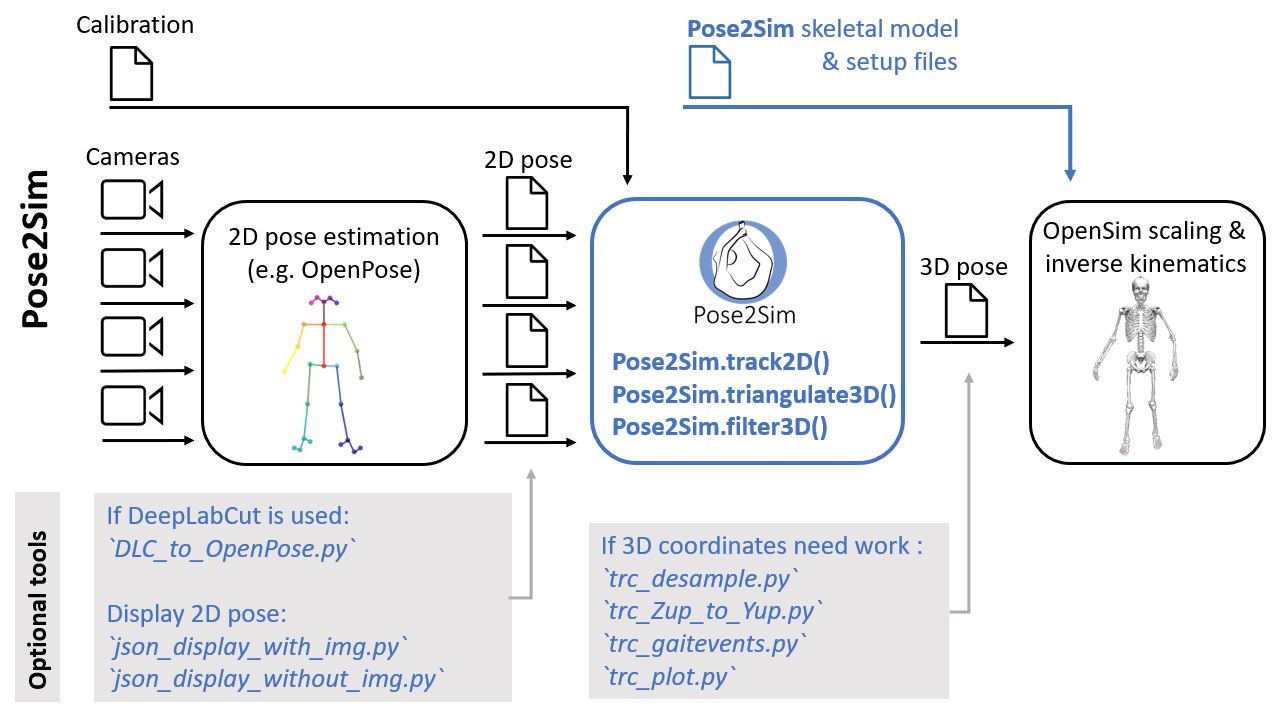

Pose2Sim provides a workflow for 3D markerless kinematics, as an alternative to the more usual marker-based motion capture methods.

Pose2Sim stands for "OpenPose to OpenSim", as it uses OpenPose inputs (2D keypoint coordinates obtained from multiple videos) and leads to an OpenSim result (full-body 3D joint angles). Other 2D solutions can alternatively be used as inputs.

If you can only use a single camera and don't mind losing some accuracy, please consider using Sports2D.

News:

Version 0.4 released: Easier and better calibration procedure.

To upgrade, type pip install pose2sim --upgrade. You will need to update your Config.toml file.

N.B.: Still looking for contributors (see How to contribute)

Contents

- Installation and Demonstration

- Use on your own data

- Utilities

- How to cite and how to contribute

Installation and Demonstration

Installation

- Install OpenPose (instructions there). The Windows portable demo is enough.
- Install OpenSim 4.x (there). Tested up to v4.4-beta on Windows. Has to be compiled from source on Linux (see there).
- Optional. Install Anaconda or Miniconda. Open an Anaconda terminal and create a virtual environment by typing:
  conda create -n Pose2Sim python=3.7
  conda activate Pose2Sim
- Install Pose2Sim. If you don't use Anaconda, type python -V in a terminal to make sure python>=3.6 is installed.
  - OPTION 1: Quick install. Open a terminal and type:
    pip install pose2sim
  - OPTION 2: Build from source and test the latest changes. Open a terminal in the directory of your choice and clone the Pose2Sim repository:
    git clone https://github.com/perfanalytics/pose2sim.git
    cd pose2sim
    pip install .
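As a quick sanity check after installing, the sketch below verifies the Python version and whether the package can be located, without importing it. It relies only on the standard library; check_environment is a hypothetical helper, and the import name Pose2Sim is taken from the demonstration code below.

```python
import importlib.util
import sys


def check_environment(min_version=(3, 6), package="Pose2Sim"):
    """Return (python_ok, package_found) for the running interpreter.

    python_ok is True if Python is at least `min_version`; package_found is
    True if `package` can be located on the import path (it is not imported).
    """
    python_ok = sys.version_info[:2] >= tuple(min_version)
    package_found = importlib.util.find_spec(package) is not None
    return python_ok, package_found


if __name__ == "__main__":
    ok, found = check_environment()
    print(f"Python {sys.version.split()[0]} >= 3.6: {ok}")
    print(f"Pose2Sim found: {found}")
```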

Demonstration Part-1: Build a 3D TRC file in Python

This demonstration provides an example experiment of a person balancing on a beam, filmed with 4 calibrated cameras processed with OpenPose.

Open a terminal, enter pip show pose2sim, and note the package location.

Copy this path and go to the Demo folder with cd <path>\pose2sim\Demo.

Type ipython, and test the following code:

from Pose2Sim import Pose2Sim

Pose2Sim.calibration()

Pose2Sim.personAssociation()

Pose2Sim.triangulation()

Pose2Sim.filtering()

You should obtain a plot of all the 3D coordinate trajectories. You can check the logs in Demo\Users\logs.txt.

Results are stored as .trc files in the Demo/pose-3d directory.
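To inspect these results outside OpenSim, a small reader can help. This sketch assumes the usual OpenSim TRC layout (a PathFileType line, a line of header keys and one of values, a line of marker names, an X1/Y1/Z1 line, then tab-separated rows of frame number, time and coordinates). parse_trc and read_trc are hypothetical helpers, not part of Pose2Sim. Empty cells, which can appear for missing markers, are read as NaN.

```python
from pathlib import Path

import numpy as np


def parse_trc(text):
    """Parse .trc text into (metadata, marker names, times, coords).

    coords has shape (n_frames, n_markers, 3); empty cells become NaN.
    """
    lines = text.splitlines()
    keys, values = lines[1].split("\t"), lines[2].split("\t")
    meta = {k.strip(): v.strip() for k, v in zip(keys, values) if k.strip()}
    # Line 4 is "Frame#  Time  Marker1 <tab><tab> Marker2 ..."
    markers = [name.strip() for name in lines[3].split("\t")[2:] if name.strip()]
    n_markers = len(markers)

    times, rows = [], []
    for line in lines[5:]:
        if not line.strip():
            continue  # skip the optional blank line after the X1/Y1/Z1 row
        fields = line.split("\t")
        times.append(float(fields[1]))
        cells = fields[2:2 + 3 * n_markers]
        cells += [""] * (3 * n_markers - len(cells))  # pad short rows
        rows.append([float(c) if c.strip() else np.nan for c in cells])

    coords = np.array(rows, dtype=float).reshape(len(rows), n_markers, 3)
    return meta, markers, np.array(times), coords


def read_trc(path):
    return parse_trc(Path(path).read_text())
```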

N.B.: Default parameters have been provided in Demo\Users\Config.toml but can be edited.

Try the calibration tool by changing calibration_type to calculate instead of convert (more info there).

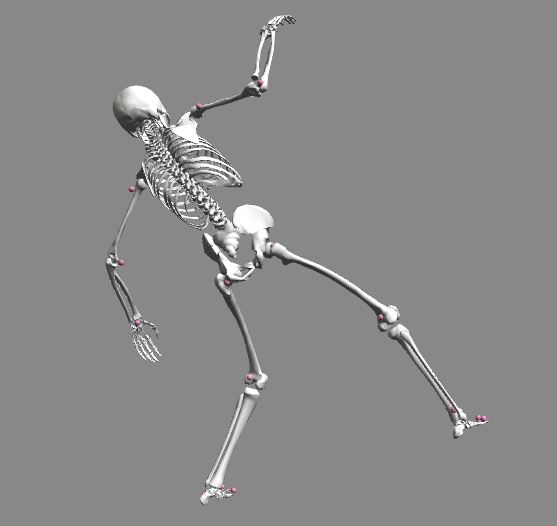

Demonstration Part-2: Obtain 3D joint angles with OpenSim

In the same vein as you would do with marker-based kinematics, start with scaling your model, and then perform inverse kinematics.

Scaling

- Open OpenSim.
- Open the provided Model_Pose2Sim_Body25b.osim model from pose2sim/Demo/opensim. (File -> Open Model)
- Load the provided Scaling_Setup_Pose2Sim_Body25b.xml scaling file from pose2sim/Demo/opensim. (Tools -> Scale model -> Load)
- Run. You should see your skeletal model take the static pose.

Inverse kinematics

- Load the provided IK_Setup_Pose2Sim_Body25b.xml setup file from pose2sim/Demo/opensim. (Tools -> Inverse kinematics -> Load)
- Run. You should see your skeletal model move in the Visualizer window.

Use on your own data

Deeper explanations and instructions are given below.

Prepare for running on your own data

Get ready.

- Find your Pose2Sim\Empty_project, copy-paste it where you like and give it the name of your choice.
- Edit the User\Config.toml file as needed, especially regarding the path to your project.
- Populate the raw-2d folder with your videos.

Project
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──raw-2d
│ ├──vid_cam1.mp4 (or other extension)
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml
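Copying the template can also be scripted. The sketch below uses shutil.copytree from the standard library. The template and destination paths are placeholders, and create_project is a hypothetical helper. Because the video folder is called raw-2d in the step above, the sketch creates that folder if the template lacks it.

```python
import shutil
from pathlib import Path


def create_project(template_dir, new_project_dir):
    """Copy an empty project template to a new location.

    Returns the path of the project's raw-2d folder, which is created if the
    template does not already contain it.
    """
    template_dir, new_project_dir = Path(template_dir), Path(new_project_dir)
    if new_project_dir.exists():
        raise FileExistsError(f"{new_project_dir} already exists")
    shutil.copytree(template_dir, new_project_dir)
    raw_dir = new_project_dir / "raw-2d"
    raw_dir.mkdir(exist_ok=True)
    return raw_dir


# Usage (placeholder paths):
# raw = create_project(r"C:\path\to\Pose2Sim\Empty_project", r"D:\MyTrial")
# then copy vid_cam1.mp4 ... vid_camN.mp4 into `raw`
```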

2D pose estimation

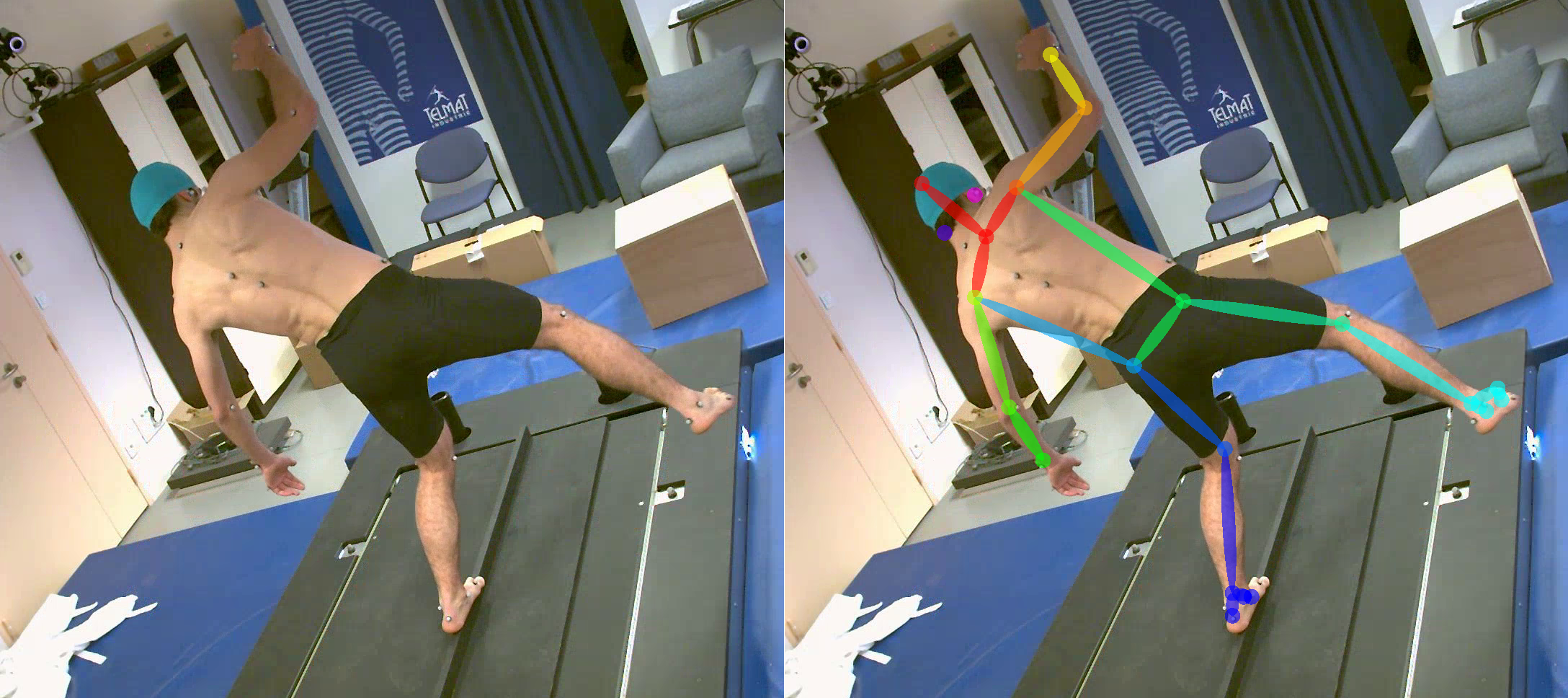

Estimate 2D pose from images with OpenPose or another pose estimation solution.

N.B.: First film a short static pose that will be used for scaling the OpenSim model (A-pose for example), and then film your motions of interest.

N.B.: The names of your camera folders must follow the same order as in the calibration file, and end with '_json'.
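A common pitfall with folder order is plain alphabetical sorting, which places pose_cam10_json before pose_cam2_json. The sketch below checks the '_json' suffix and sorts folder names "naturally". It is only an illustration: how Pose2Sim itself orders the folders is not described here, so zero-padded names such as pose_cam01_json are the safest choice whatever sorting is used. natural_key and ordered_json_folders are hypothetical helpers.

```python
import re


def natural_key(name):
    """Split a name into text and integer chunks so that 'cam10' sorts after 'cam2'."""
    return [int(chunk) if chunk.isdigit() else chunk.lower()
            for chunk in re.split(r"(\d+)", name)]


def ordered_json_folders(folder_names):
    """Return the *_json folders in natural order; raise if a name lacks the suffix."""
    bad = [name for name in folder_names if not name.endswith("_json")]
    if bad:
        raise ValueError(f"Folders not ending with '_json': {bad}")
    return sorted(folder_names, key=natural_key)


# Plain sorted() would give ['pose_cam10_json', 'pose_cam1_json', 'pose_cam2_json'].
# ordered_json_folders(['pose_cam10_json', 'pose_cam2_json', 'pose_cam1_json'])
# gives ['pose_cam1_json', 'pose_cam2_json', 'pose_cam10_json'].
```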

With OpenPose:

The accuracy and robustness of Pose2Sim have been thoroughly assessed only with OpenPose, and especially with the BODY_25B model. Consequently, we recommend using this 2D pose estimation solution. See the OpenPose repository for installation and running.

- Open a command prompt in your OpenPose directory.
- Launch OpenPose for each raw video:
  bin\OpenPoseDemo.exe --model_pose BODY_25B --video <PATH_TO_PROJECT_DIR>\raw-2d\vid_cam1.mp4 --write_json <PATH_TO_PROJECT_DIR>\pose-2d\pose_cam1_json
- The BODY_25B model has more accurate results than the standard BODY_25 one and has been extensively tested for Pose2Sim. You can also use the BODY_135 model, which allows for the evaluation of pronation/supination, wrist flexion, and wrist deviation. All other OpenPose models (BODY_25, COCO, MPII) are also supported. Make sure you modify the User\Config.toml file accordingly.
- Use one of the json_display_with_img.py or json_display_without_img.py scripts (see Utilities) if you want to display 2D pose detections.
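With many cameras, the OpenPose command above can be generated for every video. This sketch assumes the layout shown in this section (videos named vid_camN.* in raw-2d, JSON output in pose-2d/pose_camN_json) and the Windows executable bin\OpenPoseDemo.exe; on Linux builds the binary is usually build/examples/openpose/openpose.bin instead. Only the flags used above (--model_pose, --video, --write_json) are passed. openpose_commands and run_openpose are hypothetical helpers.

```python
import subprocess
from pathlib import Path


def openpose_commands(openpose_dir, project_dir, model="BODY_25B"):
    """Build one OpenPoseDemo command per video found in <project_dir>/raw-2d.

    A video named vid_cam1.mp4 gets its JSON written to
    <project_dir>/pose-2d/pose_cam1_json, following the naming used above.
    """
    exe = Path(openpose_dir) / "bin" / "OpenPoseDemo.exe"
    project_dir = Path(project_dir)
    commands = []
    for video in sorted((project_dir / "raw-2d").iterdir()):
        if not video.is_file():
            continue
        stem = video.stem
        cam = stem[len("vid_"):] if stem.startswith("vid_") else stem
        json_dir = project_dir / "pose-2d" / f"pose_{cam}_json"
        commands.append([str(exe), "--model_pose", model,
                         "--video", str(video),
                         "--write_json", str(json_dir)])
    return commands


def run_openpose(commands, openpose_dir):
    """Run the commands one after the other from the OpenPose directory."""
    for cmd in commands:
        subprocess.run(cmd, cwd=openpose_dir, check=True)


# Usage (placeholder paths):
# cmds = openpose_commands(r"C:\openpose", r"D:\MyProject")
# run_openpose(cmds, r"C:\openpose")
```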

N.B.: OpenPose BODY_25B is the default 2D pose estimation model used in Pose2Sim. However, other skeleton models from other 2D pose estimation solutions can be used alternatively.

- You will first need to convert your 2D detection files to the OpenPose format (see Utilities).

- Then, change the pose_model in the User\Config.toml file. You may also need to choose a different tracked_keypoint if the Neck is not detected by the chosen model.

- Finally, use the corresponding OpenSim model and setup files, which are provided in the Empty_project\opensim folder.

Available models are:

- OpenPose BODY_25B, BODY_25, BODY_135, COCO, MPII

- Mediapipe BLAZEPOSE

- DEEPLABCUT

- AlphaPose HALPE_26, HALPE_68, HALPE_136, COCO_133, COCO, MPII

With MediaPipe:

Mediapipe BlazePose is very fast, fully runs under Python, handles upside-down postures and wrist movements (but no subtalar ankle angles).

However, it is less robust and accurate than OpenPose, and can only detect a single person.

- Use the script Blazepose_runsave.py (see Utilities) to run BlazePose under Python, and store the detected coordinates in OpenPose (json) or DeepLabCut (h5 or csv) format:
  python -m Blazepose_runsave -i r"<input_file>" -dJs
  Type python -m Blazepose_runsave -h for an explanation of these parameters and of additional ones.
- Make sure you change the pose_model and the tracked_keypoint in the User\Config.toml file.

With DeepLabCut:

If you need to detect specific points on a human being, an animal, or an object, you can also train your own model with DeepLabCut.

- Train your DeepLabCut model and run it on your images or videos (more instructions on their repository).
- Translate the h5 2D coordinates to json files (with the DLC_to_OpenPose.py script, see Utilities):
  python -m DLC_to_OpenPose -i r"<input_h5_file>"
- Report the model keypoints in the 'skeleton.py' file, and make sure you change the pose_model and the tracked_keypoint in the User\Config.toml file.
- Create an OpenSim model if you need 3D joint angles.

With AlphaPose:

AlphaPose is one of the main competitors of OpenPose, and its accuracy is comparable. As a top-down approach (unlike OpenPose which is bottom-up), it is faster on single-person detection, but slower on multi-person detection.

- Install and run AlphaPose on your videos (more instructions on their repository).
- Translate the AlphaPose single json file to OpenPose frame-by-frame files (with the AlphaPose_to_OpenPose.py script, see Utilities):
  python -m AlphaPose_to_OpenPose -i r"<input_alphapose_json_file>"
- Make sure you change the pose_model and the tracked_keypoint in the User\Config.toml file.
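To illustrate what this conversion involves: AlphaPose writes one JSON list for the whole video, in which each detection carries an image_id and a flat keypoints list (x, y, score, ...). OpenPose writes one file per frame, with a people list whose entries hold pose_keypoints_2d. The sketch below regroups detections by frame under these assumptions. It is not the AlphaPose_to_OpenPose.py script. It assumes image_id values such as '12.jpg' (frame index as file stem) and OpenPose-like file names with a 12-digit frame number. It also writes empty frames where AlphaPose found nobody, so that frame numbering stays continuous.

```python
import json
from collections import defaultdict
from pathlib import Path


def alphapose_to_openpose_frames(detections):
    """Group AlphaPose detections by image_id into OpenPose-style frame dicts."""
    people_per_frame = defaultdict(list)
    for det in detections:
        people_per_frame[det["image_id"]].append(
            {"pose_keypoints_2d": list(det["keypoints"])})  # flat x, y, score list
    return {image_id: {"version": 1.3, "people": people}
            for image_id, people in people_per_frame.items()}


def write_openpose_jsons(frames, out_dir, prefix="cam1"):
    """Write one <prefix>_<12-digit frame>_keypoints.json file per frame.

    Assumes image_id values like '12.jpg'; frames with no detection get an
    empty people list so that numbering stays continuous.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    by_index = {int(Path(image_id).stem): data for image_id, data in frames.items()}
    paths = []
    for idx in range(max(by_index) + 1):
        data = by_index.get(idx, {"version": 1.3, "people": []})
        path = out_dir / f"{prefix}_{idx:012d}_keypoints.json"
        path.write_text(json.dumps(data))
        paths.append(path)
    return paths
```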

N.B.: Markers are not needed in Pose2Sim and were used here for validation

The project hierarchy becomes:

Project
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

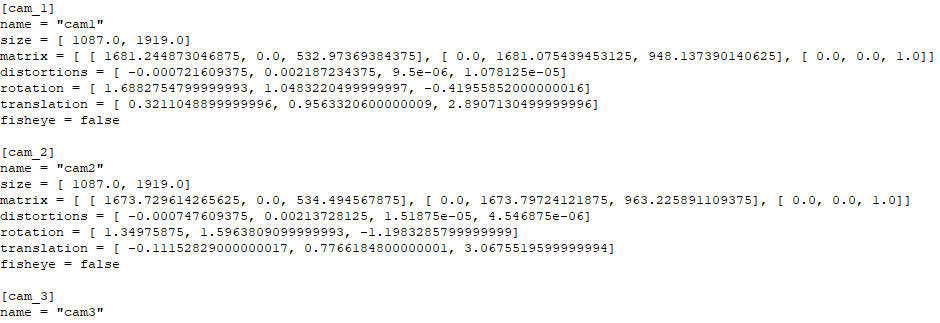

Camera calibration

Convert a preexisting calibration file, or calculate intrinsic and extrinsic parameters from scratch.

Intrinsic parameters: camera properties (focal length, optical center, distortion), usually need to be calculated only once in their lifetime

Extrinsic parameters: camera placement in space (position and orientation), need to be calculated every time a camera is moved
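The distinction can be made concrete with the pinhole camera model, in which a world point X is projected as x ~ K (R X + t). The intrinsic matrix K holds focal lengths and optical center, while the rotation R and translation t hold the camera placement. The sketch below is a minimal illustration that ignores lens distortion. Moving a camera changes R and t but leaves K untouched, which is why only extrinsics need recomputing.

```python
import numpy as np


def project(points_3d, K, R, t):
    """Project (N, 3) world points to (N, 2) pixels with x ~ K (R X + t).

    Lens distortion is ignored in this sketch.
    """
    X = np.asarray(points_3d, dtype=float)
    cam = X @ np.asarray(R, dtype=float).T + np.asarray(t, dtype=float)  # extrinsics: world -> camera
    uvw = cam @ np.asarray(K, dtype=float).T                             # intrinsics: camera -> image
    return uvw[:, :2] / uvw[:, 2:3]                                      # perspective division


# Example: focal length 1000 px, optical center (960, 540), camera 5 m away.
K = np.array([[1000.0, 0.0, 960.0], [0.0, 1000.0, 540.0], [0.0, 0.0, 1.0]])
uv = project([[0.0, 0.0, 0.0], [0.5, 0.0, 0.0]], K, np.eye(3), [0.0, 0.0, 5.0])
# uv == [[960, 540], [1060, 540]]
```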

Convert file

If you already have a calibration file, set calibration_type to 'convert' in your Config.toml file.

- From Qualisys:
  - Export calibration to .qca.txt within QTM.
  - Copy it into the calibration folder.
  - Set convert_from to 'qualisys' in your Config.toml file. Change binning_factor to 2 if you film in 540p.
- From Optitrack: Exporting calibration will be available in Motive 3.2. In the meantime:
  - Calculate intrinsics with a board (see next section).
  - Use their C++ API to retrieve extrinsic properties. Translation can be copied as is into your Calib.toml file, but TT_CameraOrientationMatrix first needs to be converted to a Rodrigues vector with OpenCV.
- From Vicon:
  - Not possible yet. Want to contribute?
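For the Optitrack route, OpenCV's cv2.Rodrigues(R) returns the rotation vector (plus a Jacobian) for a 3x3 rotation matrix. To show what that conversion computes, here is a NumPy-only sketch of the same axis-angle formula. It assumes that the 9 values of TT_CameraOrientationMatrix have already been reshaped into a proper rotation matrix in the orientation OpenCV expects (world to camera). Whether a transpose is needed depends on the Motive API conventions and is not covered here. rotation_matrix_to_rodrigues is a hypothetical helper.

```python
import numpy as np


def rotation_matrix_to_rodrigues(R):
    """Convert a 3x3 rotation matrix to a Rodrigues (axis * angle) vector."""
    R = np.asarray(R, dtype=float)
    cos_theta = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    theta = np.arccos(cos_theta)
    if np.isclose(theta, 0.0):
        return np.zeros(3)  # identity rotation
    if np.isclose(theta, np.pi):
        # For a 180 degree rotation, R = 2 n n^T - I, so (R + I) / 2 = n n^T.
        nnT = (R + np.eye(3)) / 2.0
        i = int(np.argmax(np.diag(nnT)))    # largest component of the axis n
        axis = nnT[i] / np.sqrt(nnT[i, i])  # row i of n n^T is n_i * n
        return theta * axis / np.linalg.norm(axis)
    # General case: R - R^T = 2 sin(theta) [n]_x (skew-symmetric matrix of n)
    axis = np.array([R[2, 1] - R[1, 2],
                     R[0, 2] - R[2, 0],
                     R[1, 0] - R[0, 1]]) / (2.0 * np.sin(theta))
    return theta * axis


# With OpenCV installed, cv2.Rodrigues(R)[0].ravel() should give the same vector.
```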

Calculate from scratch

- With a board:
  - Calculate intrinsic parameters:
    - Create a folder for each camera in your calibration\intrinsics folder.
    - For each camera, film a checkerboard or a charucoboard. Either the board or the camera can be moved.
    - Adjust parameters in the Config.toml file.
    - Make sure that the board:
      - is filmed from different angles, covers a large part of the video frame, and is in focus;
      - is flat, without reflections, surrounded by a white border, and not rotationally invariant (Nrows ≠ Ncols, and Nrows odd if Ncols even).
    N.B.: If you already calculated intrinsic parameters earlier, you can skip this step. Create a Calib*.toml file following the same model as earlier, and copy your intrinsic parameters there (extrinsic parameters can be filled with arbitrary values).
  - Calculate extrinsic parameters:
    - Create a folder for each camera in your calibration\extrinsics folder.
    - Once your cameras are in place, briefly film a board laid on the floor or the raw scene (only one frame is needed, but do not just take a single photo unless you are sure it does not change the image format).
    - Adjust parameters in the Config.toml file.
    - If you film a board: make sure that it is seen by all cameras. It should preferably be larger than the one used for intrinsics, as results will not be very accurate outside the covered zone.
    - If you film the raw scene (potentially more accurate): manually measure the 3D coordinates of 10 or more points in the scene (tiles, lines on walls, treadmill, etc.). They should cover as large a space as possible. Then you will click on the corresponding image points for each view.


- With points:
  - Points can be detected from a wand. Want to contribute?
  - For a more automatic calibration, OpenPose keypoints could also be used for calibration. Want to contribute?
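For the raw-scene option, the measured 3D points and the clicked 2D points determine each camera's projection matrix. The sketch below shows the textbook Direct Linear Transform (DLT) for this, as an illustration only. It is not necessarily what Pose2Sim does: with intrinsics already known, OpenCV's cv2.solvePnP is the usual tool. Plain DLT needs at least 6 points that are not all coplanar, so points measured only on the floor are not enough for this particular method. It is also unnormalized; Hartley-style normalization of the coordinates would improve conditioning with real, noisy data. dlt_projection_matrix and project are hypothetical helpers.

```python
import numpy as np


def dlt_projection_matrix(points_3d, points_2d):
    """Estimate a 3x4 projection matrix P (up to scale) with the plain DLT.

    points_3d: (N, 3) measured scene coordinates, N >= 6, not all coplanar.
    points_2d: (N, 2) clicked pixel coordinates of the same points in one view.
    """
    X = np.asarray(points_3d, dtype=float)
    x = np.asarray(points_2d, dtype=float)
    if len(X) < 6 or len(X) != len(x):
        raise ValueError("need at least 6 matching 3D/2D points")
    rows = []
    for (Xw, Yw, Zw), (u, v) in zip(X, x):
        Xh = np.array([Xw, Yw, Zw, 1.0])
        # u = (p1 . Xh) / (p3 . Xh)  ->  p1 . Xh - u * p3 . Xh = 0 (same for v with p2)
        rows.append(np.concatenate([Xh, np.zeros(4), -u * Xh]))
        rows.append(np.concatenate([np.zeros(4), Xh, -v * Xh]))
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.array(rows))
    P = vt[-1].reshape(3, 4)
    return P / np.linalg.norm(P)


def project(P, points_3d):
    """Project (N, 3) points with a 3x4 matrix P and return (N, 2) pixels."""
    pts = np.asarray(points_3d, dtype=float)
    uvw = np.hstack([pts, np.ones((len(pts), 1))]) @ np.asarray(P).T
    return uvw[:, :2] / uvw[:, 2:3]
```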

Open an Anaconda prompt or a terminal, type ipython.

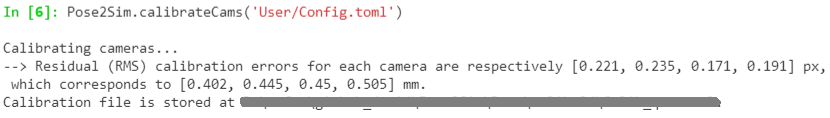

By default, calibration() will look for Config.toml in the User folder of your current directory. If you want to store it somewhere else (e.g. in your data directory), specify this path as an argument: Pose2Sim.calibration(r'path_to_config.toml').

from Pose2Sim import Pose2Sim

Pose2Sim.calibration()
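Since each step accepts the path to a Config.toml (the same argument is described for personAssociation(), triangulation() and filtering() in the sections below), several trials can be processed in one go. In this sketch, find_configs and run_pipeline are hypothetical helpers. They assume one folder per trial, each with its own User/Config.toml, and simply call each step with str(config_path), as in the Pose2Sim.calibration(r'path_to_config.toml') form above. If all trials share one calibration, calibration can be left out of the step list after the first run.

```python
from pathlib import Path


def find_configs(root):
    """Return every <trial>/User/Config.toml directly below `root`, sorted by path."""
    return sorted(Path(root).glob("*/User/Config.toml"))


def run_pipeline(config_paths, steps):
    """Call every step with an explicit config path, trial by trial."""
    for config_path in config_paths:
        for step in steps:
            step(str(config_path))


# Usage with Pose2Sim (the root folder is a placeholder):
# from Pose2Sim import Pose2Sim
# configs = find_configs(r"D:\my_study")
# run_pipeline(configs, [Pose2Sim.calibration, Pose2Sim.personAssociation,
#                        Pose2Sim.triangulation, Pose2Sim.filtering])
```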

The project hierarchy becomes:

Project
│
├──calibration
│ ├──intrinsics
│ │ ├──int_cam1_img
│ │ ├──...
│ │ └──int_camN_img
│ ├──extrinsics
│ │ ├──ext_cam1_img
│ │ ├──...
│ │ └──ext_camN_img
│ └──Calib.toml
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

Camera synchronization

Cameras need to be synchronized, so that 2D points correspond to the same instant across cameras.

N.B.: Skip this step if your cameras are already synchronized.

If your cameras are not natively synchronized, you can use this script.
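If you need to estimate the offset yourself, one idea (listed in the to-do list at the end of this page) is to compare 2D keypoint speeds between cameras. The sketch below computes the frame-to-frame speed of one keypoint and picks the integer lag that maximizes the Pearson correlation between two cameras. It is an illustration under simple assumptions (same frame rate, whole-frame offsets, a keypoint visible in both views), not the script linked above. keypoint_speed and estimate_lag are hypothetical helpers.

```python
import numpy as np


def keypoint_speed(xy):
    """Frame-to-frame speed (pixels per frame) of one 2D keypoint; xy has shape (n, 2)."""
    return np.linalg.norm(np.diff(np.asarray(xy, dtype=float), axis=0), axis=1)


def estimate_lag(speed_a, speed_b, max_lag):
    """Return the integer lag L maximizing the correlation of speed_a[t] and speed_b[t + L].

    A positive L means the same motion appears L frames later in camera B's
    frame numbering than in camera A's.
    """
    a = np.asarray(speed_a, dtype=float)
    b = np.asarray(speed_b, dtype=float)
    n = min(len(a), len(b))
    a, b = a[:n], b[:n]
    max_lag = min(max_lag, n - 3)  # keep at least 3 overlapping samples
    best_lag, best_corr = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        x, y = (a[:n - lag], b[lag:]) if lag >= 0 else (a[-lag:], b[:n + lag])
        corr = np.corrcoef(x, y)[0, 1]
        if corr > best_corr:  # a NaN correlation (constant segment) never wins
            best_lag, best_corr = lag, corr
    return best_lag
```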

Tracking, Triangulating, Filtering

Associate persons across cameras

Track the person viewed by the most cameras, in case of several detections by OpenPose.

N.B.: Skip this step if only one person is in the field of view.

Want to contribute? Allow for multiple person analysis.

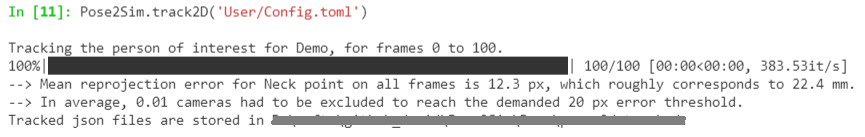

Open an Anaconda prompt or a terminal, type ipython.

By default, personAssociation() will look for Config.toml in the User folder of your current directory. If you want to store it somewhere else (e.g. in your data directory), specify this path as an argument: Pose2Sim.personAssociation(r'path_to_config.toml').

from Pose2Sim import Pose2Sim

Pose2Sim.personAssociation()

Check the printed output. If results are not satisfying, try relaxing the constraints in the Config.toml file.
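The brute-force idea behind this step (the to-do list mentions replacing brute force with a neural network) can be sketched generically: try every combination of one detection per camera and keep the one with the lowest cost. In practice the cost would be something like the reprojection error of the triangulated tracked_keypoint. Here it is a caller-supplied function, and best_combination is a hypothetical helper. The number of combinations grows as the product of the number of detections per camera.

```python
from itertools import product


def best_combination(detections_per_camera, cost):
    """Pick one detection index per camera so that cost(chosen detections) is minimal.

    detections_per_camera: one list of detections per camera.
    cost: callable taking a tuple of detections (one per camera) and returning a float.
    Returns (tuple of indices, cost), or (None, inf) if a camera has no detection.
    """
    best, best_cost = None, float("inf")
    for combo in product(*[range(len(dets)) for dets in detections_per_camera]):
        chosen = tuple(dets[i] for dets, i in zip(detections_per_camera, combo))
        c = cost(chosen)
        if c < best_cost:
            best, best_cost = combo, c
    return best, best_cost


# Toy example: detections are 1D "neck" positions and the cost is their spread.
cams = [[0.0, 5.0], [5.2, 0.1], [9.0, 4.9]]
combo, spread = best_combination(cams, lambda xs: max(xs) - min(xs))
# combo == (1, 0, 1): the person around x = 5 is seen consistently by all cameras.
```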

The project hierarchy becomes:

Project
│
├──calibration
│ ├──intrinsics
│ │ ├──int_cam1_img
│ │ ├──...
│ │ └──int_camN_img
│ ├──extrinsics
│ │ ├──ext_cam1_img
│ │ ├──...
│ │ └──ext_camN_img
│ └──Calib.toml
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──pose-2d-tracked
│ ├──tracked_cam1_json
│ ├──...
│ └──tracked_camN_json
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

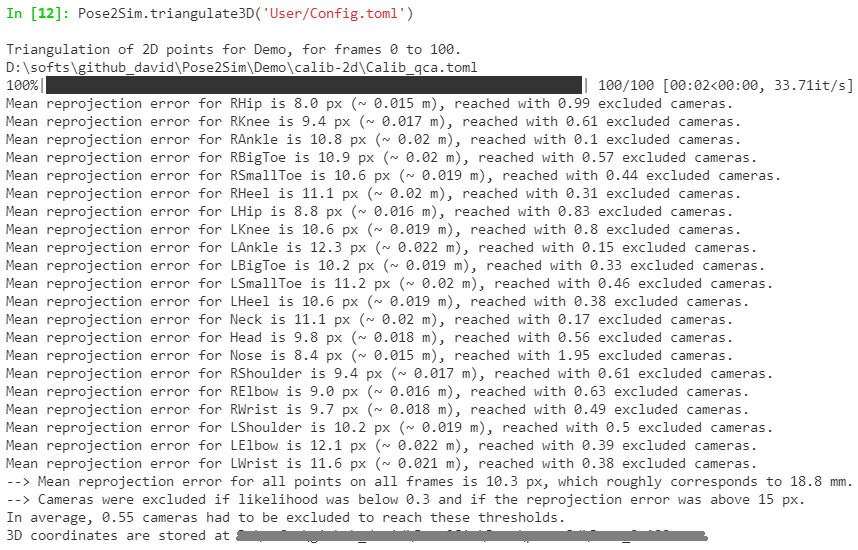

Triangulating keypoints

Triangulate your 2D coordinates in a robust way.

Open an Anaconda prompt or a terminal, type ipython.

By default, triangulation() will look for Config.toml in the User folder of your current directory. If you want to store it somewhere else (e.g. in your data directory), specify this path as an argument: Pose2Sim.triangulation(r'path_to_config.toml').

from Pose2Sim import Pose2Sim

Pose2Sim.triangulation()

Check the printed output, and visualize your .trc file in OpenSim: File -> Preview experimental data.

If your triangulation is not satisfying, try relaxing the constraints in the Config.toml file.
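At its core, triangulation solves a small linear system. The sketch below shows the classic DLT triangulation of one keypoint from several calibrated views. It accepts optional per-camera weights (for example the 2D keypoint confidence) and also computes the reprojection error used to judge the result. It is a simplified illustration: the robust behavior controlled by the constraints in Config.toml is not reproduced, and lens distortion is ignored. triangulate_dlt and reprojection_error are hypothetical helpers.

```python
import numpy as np


def triangulate_dlt(projection_matrices, points_2d, weights=None):
    """Linear (DLT) triangulation of one keypoint seen by several cameras.

    projection_matrices: list of 3x4 arrays P_i = K_i [R_i | t_i]
    points_2d: list of (u, v) pixel coordinates, one per camera
    weights: optional per-camera weights, e.g. 2D keypoint confidences
    """
    if weights is None:
        weights = np.ones(len(projection_matrices))
    rows = []
    for P, (u, v), w in zip(projection_matrices, points_2d, weights):
        P = np.asarray(P, dtype=float)
        # u = (P[0] . X) / (P[2] . X)  ->  (u * P[2] - P[0]) . X = 0 (same for v with P[1])
        rows.append(w * (u * P[2] - P[0]))
        rows.append(w * (v * P[2] - P[1]))
    _, _, vt = np.linalg.svd(np.array(rows))
    X = vt[-1]
    return X[:3] / X[3]  # back from homogeneous coordinates


def reprojection_error(P, X, uv):
    """Pixel distance between the projection of 3D point X and the observed (u, v)."""
    x = np.asarray(P, dtype=float) @ np.append(X, 1.0)
    return float(np.linalg.norm(x[:2] / x[2] - np.asarray(uv, dtype=float)))
```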

The project hierarchy becomes:

Project
│
├──calibration
│ ├──intrinsics
│ │ ├──int_cam1_img
│ │ ├──...
│ │ └──int_camN_img
│ ├──extrinsics
│ │ ├──ext_cam1_img
│ │ ├──...
│ │ └──ext_camN_img
│ └──Calib.toml
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──pose-2d-tracked
│ ├──tracked_cam1_json
│ ├──...
│ └──tracked_camN_json
│
├──pose-3d
│ └──Pose-3d.trc
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

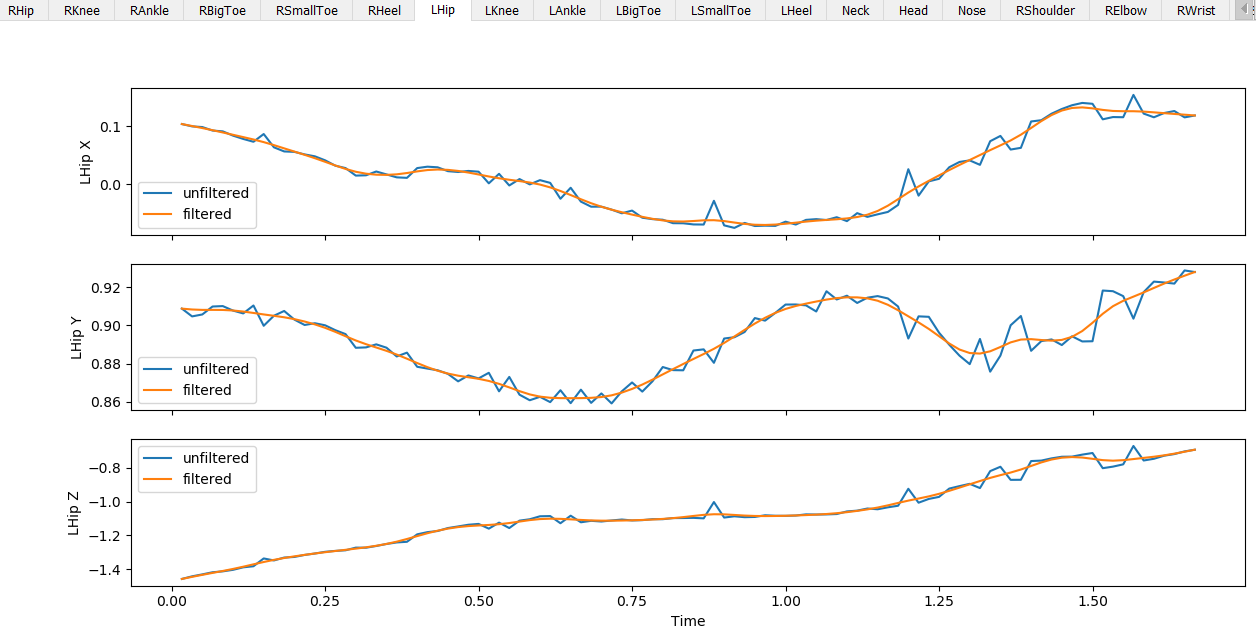

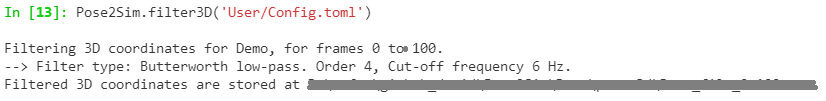

Filtering 3D coordinates

Filter your 3D coordinates.

Open an Anaconda prompt or a terminal, type ipython.

By default, filtering() will look for Config.toml in the User folder of your current directory. If you want to store it somewhere else (e.g. in your data directory), specify this path as an argument: Pose2Sim.filtering(r'path_to_config.toml').

from Pose2Sim import Pose2Sim

Pose2Sim.filtering()

Check your filtering with the displayed figures, and visualize your .trc file in OpenSim. If your filtering is not satisfying, try changing the parameters in the Config.toml file.
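Gaussian smoothing, one of the filters listed for trc_filter.py under Utilities, is easy to illustrate. The sketch below smooths each coordinate column with a normalized Gaussian kernel and edge padding; it is not Pose2Sim's implementation. A Butterworth filter, also listed there, is typically applied forward and backward (zero phase) with scipy.signal.butter and scipy.signal.filtfilt. NaNs (missing frames) propagate through the convolution, so gaps should be interpolated first. gaussian_smooth is a hypothetical helper.

```python
import numpy as np


def gaussian_smooth(signal, sigma):
    """Smooth a (n_frames,) or (n_frames, n_coords) array with a Gaussian kernel.

    sigma is in frames. Edges are padded by repeating the end values, so the
    output has the same shape as the input.
    """
    x = np.asarray(signal, dtype=float)
    radius = max(1, int(round(3 * sigma)))  # kernel truncated at +/- 3 sigma
    t = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (t / sigma) ** 2)
    kernel /= kernel.sum()  # unit gain: a constant signal stays unchanged

    def smooth_1d(column):
        padded = np.pad(column, radius, mode="edge")
        return np.convolve(padded, kernel, mode="valid")

    if x.ndim == 1:
        return smooth_1d(x)
    return np.apply_along_axis(smooth_1d, 0, x)  # each column filtered independently
```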

The project hierarchy becomes:

Project
│
├──calibration
│ ├──intrinsics
│ │ ├──int_cam1_img
│ │ ├──...
│ │ └──int_camN_img
│ ├──extrinsics
│ │ ├──ext_cam1_img
│ │ ├──...
│ │ └──ext_camN_img
│ └──Calib.toml
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ └──IK_Setup_Pose2Sim_Body25b.xml
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──pose-2d-tracked
│ ├──tracked_cam1_json
│ ├──...
│ └──tracked_camN_json
│
├──pose-3d
│ ├──Pose-3d.trc
│ └──Pose-3d-filtered.trc
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

OpenSim kinematics

Obtain 3D joint angles.

Scaling

- Use the previous steps to capture a static pose, typically an A-pose or a T-pose.

- Open OpenSim.

- Open the provided Model_Pose2Sim_Body25b.osim model from pose2sim/Empty_project/opensim. (File -> Open Model)
- Load the provided Scaling_Setup_Pose2Sim_Body25b.xml scaling file from pose2sim/Empty_project/opensim. (Tools -> Scale model -> Load)
- Replace the example static .trc file with your own data.
- Run.
- Save the new scaled OpenSim model.

Inverse kinematics

- Use Pose2Sim to generate 3D trajectories.

- Open OpenSim.

- Load the provided IK_Setup_Pose2Sim_Body25b.xml setup file from pose2sim/Empty_project/opensim. (Tools -> Inverse kinematics -> Load)
- Replace the example .trc file with your own data, and specify the path to your angle kinematics output file.
- Run.
- Motion results will appear as a .mot file in the pose2sim/Empty_project/opensim directory (automatically saved).
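To work with joint angles outside OpenSim, the .mot file can be read directly. This sketch assumes the usual OpenSim storage layout: header lines (some of the form key=value) ending with endheader, then a tab-separated line of column labels (the first one being time), then numeric rows. parse_mot and read_mot are hypothetical helpers.

```python
from pathlib import Path

import numpy as np


def parse_mot(text):
    """Return (header dict, column labels, data array) from .mot file contents."""
    lines = text.splitlines()
    end = next((i for i, line in enumerate(lines)
                if line.strip().lower() == "endheader"), None)
    if end is None:
        raise ValueError("no 'endheader' line found")
    header = {}
    for line in lines[:end]:
        if "=" in line:  # e.g. nRows=2, inDegrees=yes
            key, value = line.split("=", 1)
            header[key.strip()] = value.strip()
    columns = [c.strip() for c in lines[end + 1].split("\t") if c.strip()]
    data = np.array([[float(v) for v in line.split()]
                     for line in lines[end + 2:] if line.strip()])
    return header, columns, data


def read_mot(path):
    return parse_mot(Path(path).read_text())


# Usage: header, cols, data = read_mot("IK_result.mot")
#        knee = data[:, cols.index("knee_angle_r")]  # if the model has this coordinate
```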

Command line

Alternatively, you can use command-line tools:

- Open an Anaconda terminal in your OpenSim/bin directory, typically C:\OpenSim <Version>\bin. You'll need to adjust the time_range and output_motion_file, and enter the full paths to the input and output .osim, .trc, and .mot files in your setup file.
  opensim-cmd run-tool <PATH TO YOUR SCALING OR IK SETUP FILE>.xml
- You can also run OpenSim directly in Python:
  import subprocess
  subprocess.call(["opensim-cmd", "run-tool", r"<PATH TO YOUR SCALING OR IK SETUP FILE>.xml"])
- Or take advantage of the full OpenSim Python API. See there for installation instructions. Note that it is easier to install on Python 3.7 and with OpenSim 4.2.
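The setup-file edits mentioned above can also be scripted with the standard-library xml.etree.ElementTree. The sketch assumes an IK setup whose root holds an InverseKinematicsTool element with model_file, marker_file, time_range and output_motion_file children, as in OpenSim 4.x setup files. Check your own file before relying on these tag names. update_ik_setup is a hypothetical helper. ElementTree drops XML comments by default, so comments in the original file are not preserved. The resulting file can then be passed to opensim-cmd run-tool as shown above.

```python
import xml.etree.ElementTree as ET


def update_ik_setup(xml_text, marker_file, output_motion_file, time_range,
                    model_file=None):
    """Return a copy of an IK setup XML with new paths and time range.

    Only elements that already exist in the file are modified; a missing
    element raises ValueError rather than being created.
    """
    root = ET.fromstring(xml_text)
    tool = root.find("InverseKinematicsTool")
    if tool is None:
        raise ValueError("no <InverseKinematicsTool> element found")
    updates = {
        "marker_file": marker_file,
        "output_motion_file": output_motion_file,
        "time_range": f"{time_range[0]} {time_range[1]}",  # start and end times in seconds
    }
    if model_file is not None:
        updates["model_file"] = model_file
    for tag, value in updates.items():
        element = tool.find(tag)
        if element is None:
            raise ValueError(f"<{tag}> not found in the setup file")
        element.text = str(value)
    return ET.tostring(root, encoding="unicode")


# Usage (placeholder paths):
# with open("IK_Setup_Pose2Sim_Body25b.xml", encoding="utf-8") as f:
#     new_xml = update_ik_setup(f.read(), r"D:\trial\Pose-3d-filtered.trc",
#                               r"D:\trial\IK_result.mot", (0.0, 5.0))
```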

The project hierarchy becomes:

Project
│
├──calibration
│ ├──intrinsics
│ │ ├──int_cam1_img
│ │ ├──...
│ │ └──int_camN_img
│ ├──extrinsics
│ │ ├──ext_cam1_img
│ │ ├──...
│ │ └──ext_camN_img
│ └──Calib.toml
│
├──opensim
│ ├──Geometry
│ ├──Model_Pose2Sim_Body25b.osim
│ ├──Scaling_Setup_Pose2Sim_Body25b.xml
│ ├──Model_Pose2Sim_Body25b_Scaled.osim
│ ├──IK_Setup_Pose2Sim_Body25b.xml
│ └──IK_result.mot
│
├──pose-2d
│ ├──pose_cam1_json
│ ├──...
│ └──pose_camN_json
│
├──pose-2d-tracked
│ ├──tracked_cam1_json
│ ├──...
│ └──tracked_camN_json
│
├──pose-3d
│ ├──Pose-3d.trc
│ └──Pose-3d-filtered.trc
│
├──raw-2d
│ ├──vid_cam1.mp4
│ ├──...
│ └──vid_camN.mp4
│
└──User
  └──Config.toml

Utilities

A list of standalone tools (see Utilities), which can be either run as scripts or imported as functions. Check usage in the docstrings of each Python file. The figure below shows how some of these tools can be used to further extend Pose2Sim usage.

Converting files and Calibrating

Blazepose_runsave.py: Runs BlazePose on a video, and saves coordinates in OpenPose (json) or DeepLabCut (h5 or csv) format.

DLC_to_OpenPose.py: Converts a DeepLabCut (h5) 2D pose estimation file into OpenPose (json) files.

c3d_to_trc.py: Converts 3D point data of a .c3d file to a .trc file compatible with OpenSim. Neither analog data (force plates, EMG) nor computed data (angles, powers, etc.) are retrieved.

calib_from_checkerboard.py: Calibrates cameras with images or a video of a checkerboard, and saves the calibration in a Pose2Sim .toml calibration file.

calib_qca_to_toml.py: Converts a Qualisys .qca.txt calibration file to the Pose2Sim .toml calibration file (similar to what is used in AniPose).

calib_toml_to_qca.py: Converts a Pose2Sim .toml calibration file (e.g., from a checkerboard) to a Qualisys .qca.txt calibration file.

calib_yml_to_toml.py: Converts OpenCV intrinsic and extrinsic .yml calibration files to an OpenCV .toml calibration file.

calib_toml_to_yml.py: Converts an OpenCV .toml calibration file to OpenCV intrinsic and extrinsic .yml calibration files.

Plotting tools

json_display_with_img.py: Overlays 2D detected json coordinates on original raw images. High-confidence keypoints are green, low-confidence ones are red.

json_display_without_img.py: Plots an animation of 2D detected json coordinates.

trc_plot.py: Displays X, Y, Z coordinates of each 3D keypoint of a TRC file in a different matplotlib tab.

Other trc tools

trc_desample.py: Undersamples a trc file.

trc_Zup_to_Yup.py: Changes Z-up system coordinates to Y-up system coordinates.

trc_filter.py: Filters trc files. Available filters: Butterworth, Butterworth on speed, Gaussian, LOESS, Median.

trc_gaitevents.py: Detects gait events from point coordinates according to Zeni et al. (2008).

trc_combine.py: Combines two trc files, for example a triangulated DeepLabCut trc file and a triangulated OpenPose trc file.
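The coordinate-based method of Zeni et al. (2008) can be sketched in a few lines. Heel strike is taken where the heel is furthest in front of the pelvis along the walking direction, and toe off where the toe is furthest behind it. This is an illustration, not trc_gaitevents.py. It assumes walking along +x, uses a pelvis marker as reference, and keeps every strict local extremum, whereas real data usually needs smoothing and a minimum spacing between events. local_extrema and zeni_events are hypothetical helpers.

```python
import numpy as np


def local_extrema(signal, kind="max"):
    """Indices of strict interior local maxima (or minima) of a 1D signal."""
    s = np.asarray(signal, dtype=float)
    if kind == "min":
        s = -s
    return np.where((s[1:-1] > s[:-2]) & (s[1:-1] > s[2:]))[0] + 1


def zeni_events(heel_x, toe_x, pelvis_x):
    """Return (heel strike frames, toe off frames) from walking-axis coordinates."""
    heel_rel = np.asarray(heel_x, dtype=float) - np.asarray(pelvis_x, dtype=float)
    toe_rel = np.asarray(toe_x, dtype=float) - np.asarray(pelvis_x, dtype=float)
    heel_strikes = local_extrema(heel_rel, "max")  # heel furthest in front of the pelvis
    toe_offs = local_extrema(toe_rel, "min")       # toe furthest behind the pelvis
    return heel_strikes, toe_offs
```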

How to cite and how to contribute

How to cite

If you use this code or data, please cite Pagnon et al., 2022b, Pagnon et al., 2022a, or Pagnon et al., 2021.

@Article{Pagnon_2022_JOSS,
  AUTHOR = {Pagnon, David and Domalain, Mathieu and Reveret, Lionel},
  TITLE = {Pose2Sim: An open-source Python package for multiview markerless kinematics},
  JOURNAL = {Journal of Open Source Software},
  YEAR = {2022},
  DOI = {10.21105/joss.04362},
  URL = {https://joss.theoj.org/papers/10.21105/joss.04362}
}

@Article{Pagnon_2022_Accuracy,
  AUTHOR = {Pagnon, David and Domalain, Mathieu and Reveret, Lionel},
  TITLE = {Pose2Sim: An End-to-End Workflow for 3D Markerless Sports Kinematics—Part 2: Accuracy},
  JOURNAL = {Sensors},
  YEAR = {2022},
  DOI = {10.3390/s22072712},
  URL = {https://www.mdpi.com/1424-8220/22/7/2712}
}

@Article{Pagnon_2021_Robustness,
  AUTHOR = {Pagnon, David and Domalain, Mathieu and Reveret, Lionel},
  TITLE = {Pose2Sim: An End-to-End Workflow for 3D Markerless Sports Kinematics—Part 1: Robustness},
  JOURNAL = {Sensors},
  YEAR = {2021},
  DOI = {10.3390/s21196530},
  URL = {https://www.mdpi.com/1424-8220/21/19/6530}
}

How to contribute

I would happily welcome any proposal for new features, code improvement, and more!

If you want to contribute to Pose2Sim, please follow this guide on how to fork, modify and push code, and submit a pull request. I would appreciate it if you provided as much useful information as possible about how you modified the code, and a rationale for why you're making this pull request. Please also specify on which operating system and on which python version you have tested the code.

Here is a to-do list, for general guidance purposes only:

- pose: Support Mediapipe holistic for pronation/supination.
- calibration: Calculate calibration with points rather than board. (1) SBA calibration with wand (cf Argus, see converter here), or (2) with OpenPose keypoints. Set world reference frame in the end.
- synchronization: Synchronize cameras on 2D keypoint speeds.
- personAssociation: Multiple persons association. See Dong 2021. With a neural network instead of brute force?
- triangulation: Multiple persons kinematics (output multiple .trc coordinates files).
- GUI: Blender add-on, or webapp (e.g., with Napari). See Maya-Mocap and BlendOSim.
- Tutorials: Make video tutorials.
- Doc: Use Sphinx or MkDocs for clearer documentation.

- Catch errors.
- Conda package and Docker image.
- Copy-paste muscles from OpenSim lifting full-body model for inverse dynamics and more.
- Implement optimal fixed-interval Kalman smoothing for inverse kinematics (Biorbd or OpenSim fork).

- Undistort 2D points before triangulating (and distort them before computing reprojection error).
- Offer the possibility of triangulating with Sparse Bundle Adjustment (SBA), Extended Kalman Filter (EKF), Full Trajectory Estimation (FTE) (see AcinoSet).
- Implement SLEAP as another 2D pose estimation solution (converter, skeleton.py, OpenSim model and setup files).
- Outlier rejection (sliding z-score?). Also solve limb swapping.
- Implement normalized DLT and RANSAC triangulation, as well as a triangulation refinement step (cf DOI:10.1109/TMM.2022.3171102).
- Utilities: convert Vicon xcp calibration file to toml.
- Run from command line via click or typer.